Tackling profiling for mobile games with Unity and Arm

Learn how to take on mobile performance issues with profiling tools from Unity and Arm. Go in depth on how to profile with Unity, how to fix performance drop-offs, and how to get the most out of your game assets.

In this blog, we examine how to identify performance problems in a mobile game through the use of profiling tools from Unity and Arm. We also introduce best practices for optimizing mobile game content.

In order to identify performance problems in your game, you should first test it on a range of different devices. The best way to do this is to capture a performance profile on a real device. Tools such as the Unity Profiler and Frame Debugger can provide you with great insight into which elements of your game are consuming resources. Additionally, tools like Arm Mobile Studio enable you to capture performance counter activity data from the device, so you can see exactly how your game is using the CPU and GPU resources. While the device we used has a Mali GPU, the concepts introduced here also apply to other mobile GPUs.

Test example

The game we are testing is an action RPG, where the player must fight waves of incoming enemy NPCs with melee and spell attacks. This type of game can quickly become GPU bound on a mobile device, with increasing numbers of foes on screen as well as multiple particle and post-processing visual effects.

Profiling with Unity Profiler and Frame Debugger

We ran the game through the Unity Profiler to identify any slowdown in performance. We found a few high-priority suspects: post-processing, the fixed Timestep, and instantiation spikes.

Post-Processing

The post-processing effects were a central cause of the game’s poor GPU performance.

Of all the post-processing effects, the bloom pass, which makes bright areas in the scene glow, was the most taxing.

In the screenshot above, you can see that the Render Camera is taking a huge amount of time and crosses the frame boundary. The main thread then waits until the rendering commands are complete before preparing the next frame. Let’s look at the Unity Frame Debugger to figure out what is going on.

The first thing to notice in the Frame Debugger is that the game is being rendered at the device’s full screen resolution. For an average mobile device, this puts undue pressure on the device’s GPU, given the complexity of the content. Reducing the resolution to something more reasonable like 1080p or even 720p would significantly reduce the costs of rendering the game, especially the post-processing effects.

The next point of observation is that the bloom effect occurs in 25 draw calls for the bloom pyramid. Each draw call represents a target buffer with a size starting at half the resolution of the fullscreen device resolution. This resolution is then halved with every iteration. Reducing the initial rendering resolution is one way to reduce the potential number of iterations. Another alternative would be to modify the bloom effect source code to reduce the number of iterations taking place, and impose some sensible limit. However, in this case, it would be better to disable the post-processing effects for now, due to the considerable amount of time it takes to handle those effects. That is, at least until the rest of the game can be made to run smoothly at 30 frames per second.
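To get a feel for how the starting resolution drives the size of the bloom pyramid, here is a minimal sketch. It only models the halving described above; the stopping rule (`min_size`) and the optional `max_iterations` cap are illustrative assumptions, not Unity's actual bloom implementation, and the example resolutions are hypothetical.

```python
def bloom_pyramid(width, height, min_size=8, max_iterations=None):
    """Return the (width, height) of each downsample level of a bloom pyramid.

    The first level is half the render resolution and every later level is
    half the previous one. min_size and max_iterations are illustrative
    stopping rules (assumptions), not the rules Unity itself applies.
    """
    levels = []
    w, h = width // 2, height // 2
    while min(w, h) >= min_size:
        if max_iterations is not None and len(levels) >= max_iterations:
            break
        levels.append((w, h))
        w, h = w // 2, h // 2
    return levels


def pyramid_pixels(levels):
    """Total number of pixels written across all downsample levels."""
    return sum(w * h for w, h in levels)


if __name__ == "__main__":
    # Hypothetical render resolutions, for comparison only.
    for name, (w, h) in {"1440p": (2560, 1440), "720p": (1280, 720)}.items():
        levels = bloom_pyramid(w, h)
        print(name, len(levels), "levels,", pyramid_pixels(levels), "pixels")
```

Under these assumptions, dropping from 2560x1440 to 1280x720 removes one level and, more importantly, the largest and most expensive one. Capping `max_iterations` models the alternative of limiting iterations in the effect's source code.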

Fixed Timestep

Another improvement for the project would be to reduce the frequency of the fixed Timestep interval. We can see that it is currently short enough to be called multiple times a frame; by default, Unity sets this to 0.02 seconds (50 Hz). You can try a fixed Timestep value of 0.04 for mobile titles aiming at 30 fps. The reason is that at 0.0333, which would match 30 fps, a single frame that spikes in time leaves you with two calls in the next frame. That frame then takes longer too, and you can never break the cycle of slightly longer frames. You can also set the Maximum Allowed Timestep to prevent any catch-up from taking more than the desired amount of time.

This Timestep duration affects scripts using the FixedUpdate function and any Unity internal systems that update on the fixed update interval, for example, physics and animation.

For the purposes of this project, only physics and Cinemachine contributed heavily to the time taken, at around 3ms per call, where a call means that the system was entirely updated. Being called an additional 5 times per frame meant that this could add up to 15ms of wasted time per frame.

These extra calls occur because the slow post-processing effects lengthen each frame. Turning the effects off reduces the time spent. However, the earlier recommendation of reducing the fixed Timestep frequency to avoid unnecessary work for the CPU still stands.
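The relationship between frame time and the number of fixed updates can be illustrated with a small simulation. This is a simplified model of an accumulator-style fixed update loop, not Unity's engine code; the function name, the example frame times, and the default cap of one third of a second are assumptions made for illustration.

```python
def fixed_updates_per_frame(frame_times, fixed_dt, max_allowed=1 / 3):
    """Count how many fixed updates would run in each rendered frame.

    frame_times: duration of each frame in seconds.
    fixed_dt:    the fixed timestep in seconds.
    max_allowed: cap on the time added per frame (an assumed stand-in for
                 a "maximum allowed timestep" setting).
    """
    accumulator = 0.0
    counts = []
    for frame_time in frame_times:
        accumulator += min(frame_time, max_allowed)
        calls = 0
        # Run fixed updates until simulated time has caught up.
        while accumulator >= fixed_dt:
            accumulator -= fixed_dt
            calls += 1
        counts.append(calls)
    return counts


if __name__ == "__main__":
    slow_frames = [0.059] * 10  # roughly 17 fps (illustrative)
    print("0.02 at ~17 fps:", fixed_updates_per_frame(slow_frames, 0.02))
    steady_frames = [1 / 30] * 10  # 30 fps
    print("0.04 at 30 fps: ", fixed_updates_per_frame(steady_frames, 0.04))
```

In this model, a 0.02 timestep on slow frames runs two or three fixed updates per frame, while 0.04 at a steady 30 fps never runs more than one. Lowering `max_allowed` limits how much catch-up a single long frame can trigger.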

Instantiation spikes

During profiling, spikes could be seen in the frame time. Tracking them down in the CPU profiler hierarchy view shows that they stem from the instantiation of NPCs.

The most common solution for this is to instantiate the characters ahead of time and keep them in an idle state, in some sort of object pool. These NPCs can then be grabbed from the pool at no instantiation cost. If more are needed, then the pool can be expanded as required.

The same issue is also seen when abilities are being used, as they are also instantiating objects.

Object pooling is the easiest way to solve these problems. It may affect loading times, but allows for a much smoother frame rate at runtime, which is the lesser of two evils in this case.
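The pooling pattern described above can be sketched in a few lines. This is a language-agnostic illustration written in Python rather than Unity code; the class name, the `factory` callable, and the `created` counter are hypothetical names introduced for the example.

```python
class ObjectPool:
    """Minimal object pool: pre-creates objects and reuses them on demand."""

    def __init__(self, factory, initial_size):
        self._factory = factory
        # Pay the creation cost up front (for example, during loading).
        self._idle = [factory() for _ in range(initial_size)]
        self.created = initial_size

    def acquire(self):
        """Take an idle object, expanding the pool only if it is empty."""
        if self._idle:
            return self._idle.pop()
        self.created += 1
        return self._factory()

    def release(self, obj):
        """Return an object to the pool instead of destroying it."""
        self._idle.append(obj)


if __name__ == "__main__":
    pool = ObjectPool(factory=dict, initial_size=2)  # dict stands in for an NPC
    npcs = [pool.acquire() for _ in range(3)]        # third call expands the pool
    pool.release(npcs[0])
    reused = pool.acquire()
    print(reused is npcs[0], pool.created)
```

In a real game, `release` would also reset the object to its idle state (position, health, active flag) before it is reused.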

Profiling with Arm Mobile Studio

We’ve also used Arm Mobile Studio to gain more insight into the game’s behavior. With the tools in Mobile Studio, we can get performance counter activity data for the CPU and GPU, so we can see exactly how the game is using the device’s resources.

You can download Arm Mobile Studio for free here. There are 4 tools included:

- Performance Advisor – to generate easy-to-read reports and get optimization advice

- Streamline – a comprehensive performance profiler to capture all the counter activity

- Mali Offline Compiler – to check how a shader program would perform on a Mali GPU

- Graphics Analyzer – to debug graphics API calls and analyze how content was rendered

Performance Advisor

Performance Advisor provides us with a quick summary of game performance, and is intended to be used as a regular health check. It’s quick to generate a report, particularly if you build it into a continuous integration workflow, alongside your nightly build system.

During the first 2 minutes of the game, Performance Advisor tells us that we are only averaging 17 frames per second. The green section at the start of the frame rate analysis chart indicates where the game is loading, then suddenly, the chart turns blue, indicating that the game has become fragment bound, and it stays that way throughout. This means that the GPU in the device is struggling to process fragment workloads, which suggests that the game is either requesting too much work, or that it is not processing pixels efficiently.

As we’ve added region annotations to the game, the frame rate analysis chart shows our custom region names. Where the chart shows a marker labeled with ‘S,’ Performance Advisor has taken a screenshot of the game to help us identify what is happening on screen at that point. You can configure screen captures to be taken when the fps drops below a specified value. Here, because the fps stays low throughout, Performance Advisor takes a screenshot at the default interval of every 200 frames.

Take a look at the GPU cycles per frame chart, where we’ve added a budget of 28 million cycles per frame for this device. We’ve estimated that this is the maximum number of cycles that this device should be able to handle, while still achieving a frame rate of 30 fps. Here, we can see that the number of GPU cycles significantly exceeds this budget, and that the number of cycles increases over time.
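One common way to estimate such a budget is to divide the GPU clock frequency by the target frame rate. The sketch below shows the arithmetic. The 840 MHz clock is an inference from the figures above (28 million cycles multiplied by 30 fps), not a value stated for the test device, and the function names are hypothetical.

```python
def cycle_budget_per_frame(gpu_clock_hz, target_fps):
    """Upper bound on GPU cycles available per frame at the target frame rate."""
    return gpu_clock_hz / target_fps


def implied_fps(cycles_per_frame, gpu_clock_hz):
    """Frame rate the GPU could sustain if every frame cost this many cycles."""
    return gpu_clock_hz / cycles_per_frame


if __name__ == "__main__":
    gpu_clock_hz = 840e6  # assumed clock, inferred from 28M cycles x 30 fps
    print(cycle_budget_per_frame(gpu_clock_hz, 30))  # cycles available per frame
    print(implied_fps(56e6, gpu_clock_hz))           # a frame twice over budget
```

This is a best-case estimate: it assumes the GPU runs at its maximum frequency for the whole frame and is never idle, so a practical budget is usually set somewhat lower.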

Performance Advisor provides optimization advice when it finds a problem. If we look at the shader cycles per frame chart, we see that the number of execution engine cycles is high. Inside a Mali shader core, the execution engine is responsible for processing arithmetic operations. Performance Advisor has flagged this as a problem and advises us to reduce computation in shaders.

There is a simple fix for this. You can reduce the precision of shader variables to mediump, rather than highp, with no noticeable change on-screen. This will significantly reduce shader cost. For information on how to do this, refer to Shader data types and precision in our documentation. Additionally, as we discovered earlier with Unity’s Frame Debugger, the game is currently rendering to the device’s full screen resolution. Any changes we make to reduce the game’s rendering resolution (to 1080p or 720p) will also reduce the fragment shading cost.

Content metrics

We had set a budget of 500,000 vertices per frame for this device. The budget is exceeded around 45 seconds in and the number increases steadily over time.

Looking at the primitives per frame chart, we notice that the total number of primitives being processed increases over time, even though the number of visible primitives stays relatively constant. In the first 2 minutes of the game, the only new objects that are created are the enemy NPCs, which then get destroyed in a blast of lightning by our hero. This suggests that when the enemies are destroyed, their geometry is still present, even though it is not visible.

There are several reasons why the GPU may not be able to handle the game’s demands, so we need to explore Arm’s profiling tool further with Streamline. Streamline will tell us more about this heavy fragment workload, and by looking at the other counters, we can find clues on how to lighten the load.

Streamline analysis

Looking at the same section of the game in Streamline, we can explore a range of charts that show the GPU counter activity for the different stages of geometry and pixel processing. This illustrates how the game’s content is processed by the GPU, and whether there is unnecessary processing.

Mali-based GPUs take a tile-based approach to processing graphics workloads, where the screen space is split up into tiles, and each tile is processed to completion in order. For each render pass, geometry processing executes first, then the pixels are colored in tile by tile during pixel processing.

We already know that the GPU in the device is maxed out with fragment workloads, so we need to look for ways to reduce pressure on the pixel processing stage.

One way to reduce the pixel processing load is to lower the complexity of the geometry that gets sent for pixel processing in the first place. Geometry that is completely off screen or backfacing is killed before pixel processing, but small triangles which only partially cover 2×2 pixel quads can erode fragment efficiency and have high bandwidth cost per output pixel.

The Mali Geometry Usage and Mali Geometry Culling Rate charts in Streamline show how efficiently the GPU processes geometry. We can see the number of primitives being sent to the GPU, and how many of them are culled during geometry processing. Work that is culled at this stage won’t get passed through to pixel processing. This is good news, but we could organize the content more efficiently, so that non-visible primitives aren’t passed through at all.

In the Mali Geometry Usage chart, we can see that 1.07 million primitives enter geometry processing (orange line) in the selected timeframe (about 0.05 seconds), but 700,000 primitives are culled at this stage (red line).

The Mali Geometry Culling Rate chart shows why they are culled. Around half are culled by the facing test (orange line), which is expected, as these are the backfacing triangles of our 3D objects. What is more concerning is that 31.9% of primitives are culled by the sample test (purple line) – ideally, this number should be less than 5%. The sample test indicates that these primitives were too small to be rasterized, failing to hit a single sample point, and therefore, considered invisible. This can happen when objects with complex meshes are positioned far away from the camera, and triangles in the mesh are too small to be visible. Higher numbers could indicate that the game object meshes are too complex for their position on screen.

This problem gets worse for primitives that are big enough to pass the sample test but still only cover a few pixels. These ‘microtriangles’ are passed through to pixel processing and are expensive to process. This is because, during fragment shading, triangles are rasterized into two-by-two pixel patches, called quads. Tiny triangles only hit a subset of the pixels inside a quad, yet the whole quad must be sent for processing. This means that the fragment shader will run with idle lanes in the hardware, making shader execution less efficient.
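The cost of partially covered quads can be made concrete with a short sketch. It takes the set of pixels a triangle covers, works out which 2×2 quads are touched, and reports the fraction of shaded lanes that do useful work. The function name and the example pixel sets are hypothetical, and real hardware applies further rules (such as sample testing) that are not modeled here.

```python
def quad_efficiency(covered_pixels):
    """Fraction of shaded lanes that correspond to pixels actually covered.

    covered_pixels: iterable of (x, y) integer pixel coordinates hit by a
    triangle. Each touched 2x2 quad is shaded as a whole (4 lanes).
    """
    pixels = set(covered_pixels)
    quads = {(x // 2, y // 2) for x, y in pixels}
    return len(pixels) / (4 * len(quads))


if __name__ == "__main__":
    single_pixel = [(5, 5)]
    full_block = [(x, y) for x in range(4) for y in range(4)]
    sliver = [(1, 1), (2, 2), (3, 3)]  # thin diagonal triangle
    for name, px in [("single", single_pixel), ("block", full_block), ("sliver", sliver)]:
        print(name, quad_efficiency(px))
```

A triangle that covers a single pixel still occupies a full quad, so only a quarter of the shader lanes produce a visible result.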

To check whether we have a problem with microtriangles, we can use the Mali Core Workload Property chart in Streamline to monitor the efficiency of coverage. Ideally, the partial coverage rate should be less than 10%. We can see here that in some sections, the partial coverage rate (green line) is very high, over 70%. This value suggests that the content has a high density of microtriangles, which confirms the issue that was flagged earlier by the high sample culling rate.

Geometry that does end up on screen needs to be appropriately sized for its position. A complex piece of scenery that is far away does not need to be very detailed, as it does not contribute much to the scene. We could use Level Of Detail (LOD) Meshes for objects that are further away from the camera, to reduce complexity and save processing power and DRAM bandwidth. Or, instead of using geometry, we could use textures and normal maps to build surface details for objects.

Analyzing shaders

Through the Performance Advisor report, we discovered that our shaders could be too expensive, and that we could benefit from reducing their precision. In Streamline, we can use the Mali varying usage chart to see the number of cycles where 32-bit (high precision) or 16-bit (medium precision) interpolation is active. Here, we can see that 32-bit interpolation is used in most cycles. 16-bit variables interpolate twice as fast as 32-bit variables, and use half the space in shader registers to store interpolation results, so it is recommended to use mediump (16-bit) varying inputs to fragment shaders whenever possible.

Shader analysis with Mali Offline Compiler

To explore shaders, we can use Arm Mobile Studio’s static offline compiler tool, to generate a quick analysis of the shader program.

To do this, you need to grab the shader code from the compiled file that Unity gives you, then run Mali Offline Compiler on that file:

- In Unity, select the shader you want to analyze, either directly from your assets folder, or by selecting a material, clicking the gear icon and choosing Select shader.

- Choose Compile and show code in the Inspector. The compiled shader code will open in your default code editor. This file contains several shader code variants.

- Copy either a vertex or fragment shader variant from this file into a new file, and give it an extension of either .vert or .frag. Vertex shaders start with #ifdef VERTEX and fragment shaders start with #ifdef FRAGMENT. They end with their respective #endif. (Don’t include the #ifdef and #endif statements in the new file).

- In a command terminal, run Mali Offline Compiler on this file, specifying the GPU you want to test. For example: malioc -c Mali-G72 myshader.frag. Refer to Getting started with Mali Offline Compiler for more instructions.
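The copy step in the list above can be scripted. The following is a minimal sketch that pulls the first variant for a given stage out of the compiled shader text, dropping the enclosing #ifdef and #endif lines while keeping any nested preprocessor blocks. The function name and the sample text are hypothetical, and real compiled files may contain several variants, of which this returns only the first.

```python
def extract_variant(source, stage):
    """Return the body of the first '#ifdef <stage>' block, or None.

    stage is "VERTEX" or "FRAGMENT". The enclosing #ifdef/#endif lines are
    not included; nested #if/#ifdef/#ifndef blocks inside the body are kept.
    """
    body = []
    depth = 0
    for line in source.splitlines():
        stripped = line.strip()
        if depth == 0:
            if stripped == f"#ifdef {stage}":
                depth = 1  # start collecting after this line
            continue
        if stripped.startswith("#if"):
            depth += 1
        elif stripped.startswith("#endif"):
            depth -= 1
            if depth == 0:
                return "\n".join(body)
        body.append(line)
    return None


if __name__ == "__main__":
    sample = (
        "#ifdef VERTEX\nvoid main() { /* vertex */ }\n#endif\n"
        "#ifdef FRAGMENT\nvoid main() { /* fragment */ }\n#endif\n"
    )
    print(extract_variant(sample, "FRAGMENT"))
```

The returned text can be written to a file such as myshader.frag and passed to malioc as shown in the last step.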

We chose to analyze the fragment shader that was responsible for the dissolve effect that occurs when the enemy NPCs die. Here is the Mali Offline Compiler report, with highlighted sections of interest:

We can see that only 2% of arithmetic computation is done efficiently at 16-bit precision. The shader will operate more efficiently if we reduce precision from highp to mediump. This reduces both energy consumption and register pressure, and can double the performance. There are situations where highp is always required, such as for position and depth calculations, but in many cases there is little noticeable difference on-screen when reducing precision to mediump.

The report provides an approximate cycle cost breakdown for the major functional units in the Mali shader core. Here, we can see that the arithmetic unit is the most heavily used.

In the shader properties section, we see that this shader contains uniform computation that depends only on literal constants or uniform values. This produces the same result for every thread in a draw call or compute dispatch. Ideally, this kind of uniform computation should be moved into application logic on the CPU.

We can also see that the shader can modify the fragment coverage mask that determines which sample points in each pixel are covered by a fragment, using the discard statement to drop fragments below an alpha threshold. Shaders with modifiable coverage must use a late-ZS update, which can reduce efficiency of early ZS testing and fragment scheduling for later fragments at the same coordinate. You should minimize the use of discard statements and alpha-to-coverage in fragment shaders where possible. Refer to the Arm Mali Best Practices guide for advice on using discard statements.

Graphics API call analysis with Graphics Analyzer

In Arm Mobile Studio’s Graphics Analyzer, you can see all the graphics API calls that the application made, and step through them one by one, to see how the scene is built. This helps to identify objects that are too complex for their on-screen size and distance from the camera. Here are a few examples we found in this game:

The brickwork over in the far corner of the scene is built with geometry and uses 2064 vertices. The detail is not extremely visible in the final output, so this is wasted processing.

We found the same issue for the floor tiles – these are 1170 vertices each, but even though the object is close to the camera, the scene does not really benefit from this complexity. It would be more efficient to use a normal map here, to represent the bumps and angular edges rather than building it with triangles. Additionally, we can see that these objects are drawn using separate draw calls. Reducing the number of draw calls by batching objects together or using object instancing could increase performance.

Another example is the statues at the back of the scene – 6966 vertices each. You can see that the mesh is quite complex, which will give a great visual result when the player gets close to the statues, but from this camera position, they are hardly noticeable. It would save a lot of processing power to use Mesh LODs here to represent these objects when they are this far away from the camera.

Remember that reducing complexity for many similar objects adds up to a huge saving in geometry processing, which subsequently reduces the amount of fragment shading required. Not only will this bring the fragment workload down and increase our frames per second, it will also reduce the install footprint of the APK.

Game optimizations

We’ve uncovered several areas where we could make changes to the game to improve performance. Here are the ones we chose to implement, and how we did it.

Fixed Timestep

Fixed Timestep is a frame rate-independent interval that controls when physics calculations and FixedUpdate() events are performed. By default, this is set to 0.02 seconds, so it runs 50 times per second. While 50 or even 60 fps is sustainable on high-end mobile devices, more mainstream devices run at 30 fps, which this title is targeting. Go into Edit > Project Settings, and then into the Time category, to set the Fixed Timestep property to 0.04. This will ensure that your physics calculations and FixedUpdate() events run in step with frame updates.

After the adjustments were made to the fixed Timestep in Unity, the fixed update portion of the main game loop was only called once per frame, for an average of 1.5ms. This is a huge improvement from the 12ms that it had taken previously, and a simple solution to a common performance pitfall.

Resources Folder

At the startup of the app, data for all objects referenced by built-in scenes or in the Resources folder are loaded into the Instance ID cache. These assets are treated like one big asset bundle, so there is metadata and indexing information that is always loaded into memory. Once an asset from this bundle is used, it can never be unloaded from memory.

The recommended method for handling assets and resources when aiming to improve your memory consumption is through the Addressable Asset System, which provides an efficient way to unload content that’s no longer needed from memory.

GPU instancing

In our environment, we have many objects that appear multiple times. Walls, floor tiles and other environment props are all duplicated to build out this scene. We can save draw calls by enabling GPU instancing on the objects’ material. GPU instancing renders identical meshes with a small number of draw calls, and allows each instance to have different parameters, such as color or scale. This modification can add an uplift to CPU performance. Below, you can see Performance Advisor data before GPU instancing was enabled.

And here, you can see the same portion of the application, but with GPU instancing enabled – a small but measurable gain toward our target of 30 fps.
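As a rough mental model of why instancing saves draw calls, the sketch below counts draw calls for a scene with and without grouping identical mesh and material pairs. It is a simplification: the function name, the scene contents, and the `max_instances_per_call` limit are all hypothetical, and real engines apply additional batching rules.

```python
import math
from collections import Counter


def count_draw_calls(objects, instancing, max_instances_per_call=500):
    """Estimate draw calls for a list of (mesh, material) pairs.

    Without instancing, every object is its own draw call. With instancing,
    objects sharing a mesh and material are drawn together, split into
    batches of at most max_instances_per_call (an assumed limit).
    """
    if not instancing:
        return len(objects)
    groups = Counter(objects)
    return sum(math.ceil(n / max_instances_per_call) for n in groups.values())


if __name__ == "__main__":
    # Hypothetical scene: repeated walls, floor tiles, and a few statues.
    scene = [("wall", "stone")] * 120 + [("tile", "stone")] * 300 + [("statue", "marble")] * 4
    print(count_draw_calls(scene, instancing=False))
    print(count_draw_calls(scene, instancing=True))
```

The saving depends on how many objects genuinely share both mesh and material, which is why duplicated environment props are good candidates.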

Render textures

Render textures are a way of adding 3D elements to your UI, as well as many other use cases. If you have a camera rendering to the render texture, be sure to disable the camera when it’s not onscreen. There is no need to render something that the user won’t see. Use Graphics Analyzer or Unity’s Frame Debugger to make sure that these textures are not being updated offscreen.

Object pooling

Rather than putting extra work on the CPU by creating and destroying the same objects over and over, try object pooling. Object pooling is a design pattern that prompts you to create the objects you will need up front, front loading the work of the CPU. Then, rather than destroying them, you can add them back to the pool to be reused when an object of the same type is needed again. This is a fantastic way to relieve the processing power of the CPU, so it can work freely on more important tasks for your game.

With the move to object pooling, there is no spike attached to the waves of enemies appearing onscreen that can be identified in the Unity Profiler captures, as well as no discernible effect on the frame rate.

Level Of Detail (LOD) meshes

When a Mesh is onscreen, the GPU spends time rendering all of the triangles in the mesh, no matter how small. In games where your camera or assets can move, this often creates a situation where you can spend a lot of the GPU’s resources rendering triangles of meshes that are too small to be seen in the frame. To address this, use Level Of Detail (LOD) Meshes. This lets your game leverage less complex meshes as the camera moves away from the assets, which decreases the amount of mesh complexity that the GPU must render and reduces the vertex count per frame, giving larger triangles to pixel processing. Not only does this improve efficiency, it keeps the artistic integrity of the scene intact.
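LOD selection is typically driven by how much of the screen an object occupies. The sketch below picks a LOD index from the fraction of screen height an object covers. The threshold values and function name are illustrative assumptions, not settings taken from this project.

```python
def select_lod(screen_height_fraction, thresholds=(0.6, 0.3, 0.1)):
    """Pick a LOD index from the fraction of screen height an object covers.

    thresholds are assumed transition points: LOD0 at or above 60% of the
    screen height, LOD1 at or above 30%, LOD2 at or above 10%. Anything
    smaller returns None, meaning the object is not drawn at all.
    """
    for level, threshold in enumerate(thresholds):
        if screen_height_fraction >= threshold:
            return level
    return None


if __name__ == "__main__":
    for fraction in (0.8, 0.4, 0.15, 0.05):
        print(fraction, "->", select_lod(fraction))
```

Higher LOD indices map to simpler meshes, so distant objects contribute fewer and larger triangles.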

For other asset optimization tips, be sure to check out the Game Artist Guides from Arm.

Texture Atlases

When you know that some assets with the same material properties will be used in the same scene, you can batch them together. Combine their texture data into a single texture atlas, which will save draw calls by drawing them at once, and result in a smaller footprint when compressed, compared to multiple separate files.
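When textures are packed into an atlas, each mesh's UV coordinates have to be remapped into the sub-rectangle its texture occupies. Here is a minimal sketch of that remapping for a simple grid layout. The function names and the grid layout are assumptions for illustration; atlas tools usually handle this step, along with padding between tiles, for you.

```python
def grid_rect(index, columns, rows):
    """Normalized (x, y, width, height) of tile `index` in a columns x rows atlas."""
    col, row = index % columns, index // columns
    return (col / columns, row / rows, 1 / columns, 1 / rows)


def atlas_uv(u, v, rect):
    """Remap a texture's own (u, v) in [0, 1] into its rectangle in the atlas."""
    x, y, w, h = rect
    return (x + u * w, y + v * h)


if __name__ == "__main__":
    rect = grid_rect(index=1, columns=2, rows=2)  # second tile of a 2x2 atlas
    print(rect)
    print(atlas_uv(0.5, 0.5, rect))               # center of that tile
```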

Float vs Half Shader precision

When writing your own custom shaders, or using Shader Graph, you can decide on what precision to use: float or half. Choosing half, wherever possible, will make for more performant shaders – but remember that you will likely need to use float for anything that deals with world-space positions or depth calculations!
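To see why colors tolerate half precision while positions often do not, the sketch below rounds values through a 16-bit float. It assumes half maps to an IEEE 754 16-bit float, which is an assumption about the target hardware, and uses Python's struct module purely as a convenient way to emulate that rounding.

```python
import struct


def to_half(value):
    """Round a number to the nearest IEEE 754 16-bit float and back."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


if __name__ == "__main__":
    # A color channel in [0, 1]: the rounding error is far below 1/255.
    color = 0.7
    print(color, "->", to_half(color))

    # A world-space coordinate: near 1000 units, half floats are 0.5 apart.
    position = 1000.3
    print(position, "->", to_half(position))
```

The error on a color channel is too small to see, but a position around 1000 units snaps to the nearest half unit, which can cause visible jitter.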

Integrated vs Post Processing V2 feature sets

When you start to plan the post-process effects for your project, you have two options to choose from: the legacy Integrated feature set, or the new Post Processing v2 feature set. Below, you can see the game using the Integrated feature set.

Every 3–4 frames, we see a spike in V-Sync, where the system is waiting on the frame to render. This causes the game to drop below 30 fps, consistently, and wastes power on the device. Here, however, you can see the game’s profiler data using the same effects, this time, with the Post Processing v2 feature set.

This profiler graph is much better, as Post Processing v2 is optimized to run on mobile hardware. Use it in your project to get the best post-processing performance.

Post-processing effects

Adding post-processing effects to your game can add a nice layer of polish and visual depth to your project. But it’s also important to balance these effects with performance. After all, these effects can get expensive. Turning these off on mass-market devices can save a lot of power, and stop a device from heating up in your players’ hands.

Once the other optimizations were in place, we could still see spiking in some areas. By binary searching, turning things on and off, we eventually tracked down two things. One was the post-processing stack that was being used. Addressing this helped with the total time, but the frame rate only levelled out once we turned off the second, anti-aliasing. The improvement was large enough that some of the post-processing was able to stay on, even on the lowest spec devices we were using to test.
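The binary search over features can be sketched as follows. This assumes a single culprit and a repeatable test, both simplifications compared with the manual process described above. The function name, the feature names, and the `is_slow` callback (which stands in for running the game with only the given features enabled and checking the frame time) are hypothetical.

```python
def find_culprit(features, is_slow):
    """Bisect a list of features to find the one that makes the game slow.

    is_slow(enabled) must return True when running with only `enabled`
    turned on is slow. Assumes exactly one feature is responsible.
    """
    candidates = list(features)
    while len(candidates) > 1:
        half = candidates[: len(candidates) // 2]
        if is_slow(half):
            candidates = half
        else:
            candidates = candidates[len(half):]
    return candidates[0]


if __name__ == "__main__":
    effects = ["bloom", "anti-aliasing", "depth of field", "vignette", "color grading"]
    # Stand-in for a real measurement on the device.
    print(find_culprit(effects, is_slow=lambda enabled: "anti-aliasing" in enabled))
```

With several culprits, as in our case, you repeat the search after disabling each one you find.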

After optimizing the game, we ran it through Arm Mobile Studio again, to look for any differences. The Performance Advisor report now shows that we have achieved an average fps of 28.9 (previously 17), and reduced overall fragment boundness. Fragment activity is still high in some sections of the game, so we still have work to do, but with good data to guide our investigation, we should be able to optimize these sections to further improve performance.

The number of vertices per frame is now well under our 500,000 budget, and you can see regular dips where the enemy NPCs are destroyed.

Geometry usage and culling are now much more efficient, with the number of visible primitives at a much healthier percentage of the number of input primitives. The facing test is responsible for around 50% of culled primitives, as expected, and the share killed by the sample test is below 10%, showing that we have reduced the number of very small triangles.

In summary

By using Unity’s Profiler and Frame Debugger, along with Arm Mobile Studio, we have been able to discover multiple ways to improve performance and reduce the pressure on the CPU and GPU on a mobile device. Some of the problems we uncovered could be avoided in future titles, by sticking to a set of best practices for content.

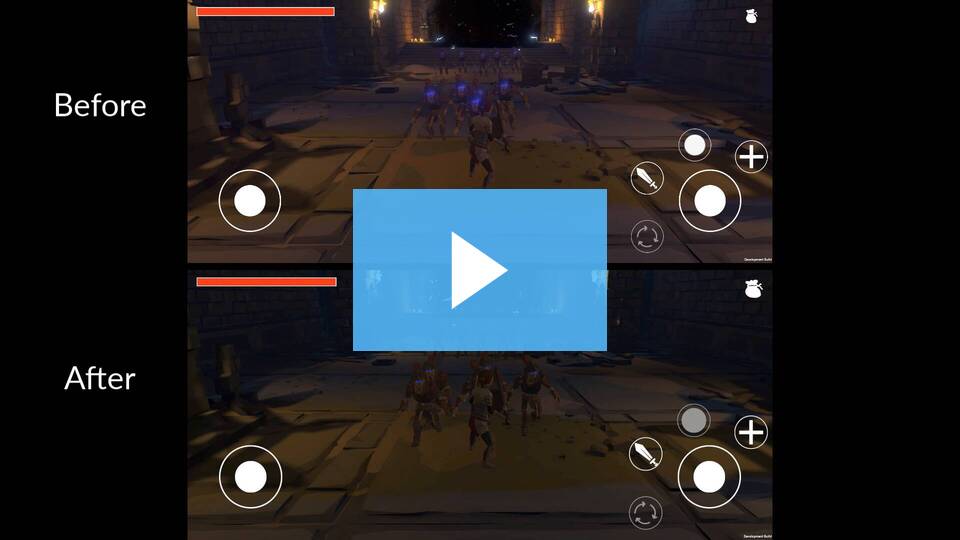

Of course, we don’t want optimizations to reduce the quality of the onscreen visuals. Here’s how the optimized version of the game looks beside the original version.

Proactive performance testing

Performance testing often happens quite late in the development cycle. It’s great to find further opportunities to optimize, but what if there’s no time to fix the issues before your release deadline? It’s much more practical to design content optimally to start with. It can be useful to set content budgets around mesh complexity, shader complexity and texture compression, to give your team the best chance to design efficiently for mobile. Here are some resources that could help your team:

- Arm guide for Unity developers

- Developer guides for best practices on Mali

- Unity learn course, 3D Art Optimization for Mobile Applications

Once you know that most of your application and assets follow a set of best practices, you can do regular performance testing throughout your development cycle, to catch any issues early enough to fix them.

Teams that use a continuous integration system can take advantage of automated performance testing, available with Arm Mobile Studio professional edition. This edition can run across multiple devices in a device farm, and takes the pain out of manual profiling. The reported data can even be fed into any JSON-compatible database, so that you can build visual dashboards and alerts to monitor how performance changes over time, to flag issues sooner.

Never tried profiling tools before?

Unity’s built-in profiler is a great place to start. Read about how to profile your application in the Unity documentation. Or, explore Frame Debugger, which lets you investigate how an individual frame is constructed.

Download Arm Mobile Studio for free from the Arm Developer website and check out the starter guides for Performance Advisor, Streamline, Mali Offline Compiler and Graphics Analyzer, to get up and running quickly.

Get in touch

For additional help profiling with Unity’s Profiler and Frame Debugger, please feel free to ask questions in our forum.

For further support while working with Mali devices or Arm Mobile Studio, go to Arm’s Graphics and Gaming Forum, where you can ask questions, and Arm will be happy to help.

- Copyright © 2024 Unity Technologies